New research shows how deep learning models trained for network intrusion detection can be bypassed

Recent years have seen a growing interest in the use of machine learning and deep learning in cybersecurity, especially in network intrusion detection and prevention.

However, according to a study by researchers at The Citadel, a military college in South Carolina, US, deep learning models trained for network intrusion detection can be bypassed through adversarial attacks: specially crafted data that fools neural networks into changing their behavior.

DNS amplification attacks

The study (PDF) focuses on DNS amplification, a kind of denial-of-service attack in which the attacker spoofs the victim’s IP address and sends multiple name lookup requests to a DNS server.

The server then sends all the responses to the victim. Because a DNS request is much smaller than its response, the attack is amplified: the victim is flooded with far more bogus traffic than the attacker had to send.
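The arithmetic behind the amplification can be sketched in a few lines of Python. The packet sizes and request count below are hypothetical values chosen for illustration, not figures from the study, and the function names are made up for this example.

```python
def amplification_factor(request_bytes: int, response_bytes: int) -> float:
    """Ratio of response size to request size for one spoofed lookup."""
    if request_bytes <= 0:
        raise ValueError("request_bytes must be positive")
    return response_bytes / request_bytes


def victim_traffic_bytes(response_bytes: int, num_requests: int) -> int:
    """Total bytes the DNS server sends to the spoofed (victim) address."""
    return response_bytes * num_requests


# Hypothetical sizes: a 60-byte query that triggers a 3,000-byte response.
REQUEST_BYTES = 60
RESPONSE_BYTES = 3000
NUM_REQUESTS = 10_000

factor = amplification_factor(REQUEST_BYTES, RESPONSE_BYTES)
attacker_sent = REQUEST_BYTES * NUM_REQUESTS
victim_received = victim_traffic_bytes(RESPONSE_BYTES, NUM_REQUESTS)

print(f"amplification factor: {factor:.0f}x")
print(f"attacker sent {attacker_sent} bytes, victim received {victim_received} bytes")
```

With these assumed numbers, every byte the attacker sends turns into 50 bytes arriving at the victim.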

“We decided to study deep learning in DNS amplification due to the increasing popularity of machine learning-based intrusion detection systems,” Jared Mathews, the lead author of the paper, told The Daily Swig.

“DNS amplification is one of the more popular and destructive forms of DoS attacks so we wanted to explore the viability and resilience of a deep learning model trained on this type of network traffic.”

Attacking the model

To test the resilience of network intrusion detection systems, the researchers created a machine learning model to detect DNS amplification traffic.

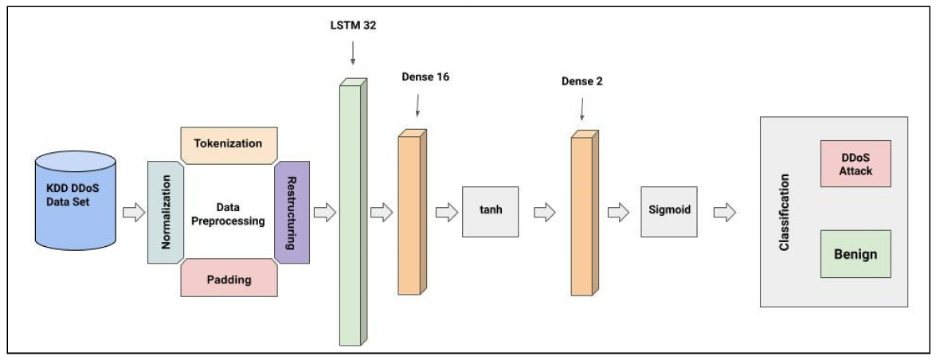

They trained a deep neural network on the open source KDD DDoS dataset. The model achieved above 98% accuracy in detecting malicious data packets.

Architecture of machine learning model for DNS amplification attacks

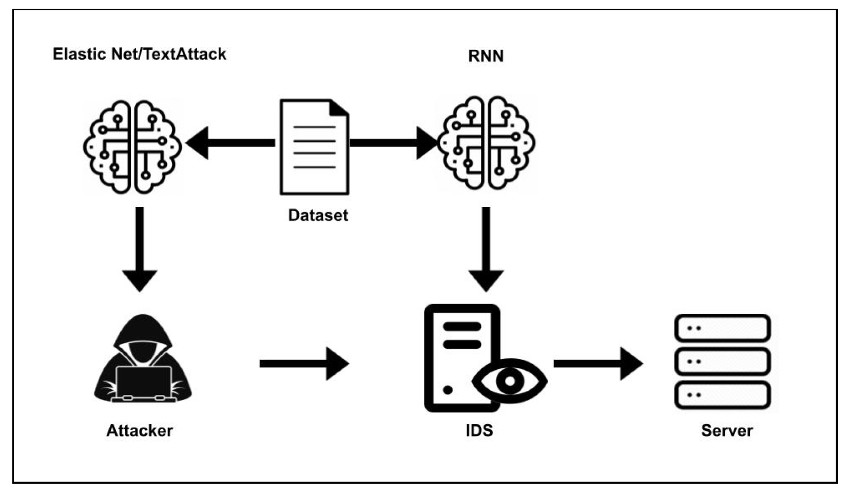

To test the resilience of the ML-based network intrusion detection system, the authors pitted it against Elastic-Net Attacks to Deep Neural Networks (EAD) and TextAttack, two popular adversarial attack techniques.

“We chose TextAttack and Elastic-Net Attack due to their proven results in both natural language processing and image processing respectively,” Mathews said.

Although the attack algorithms were not originally intended to be applied to network packets, the researchers were able to adapt them for the purpose. They used the algorithms to generate DNS amplification packets that passed as benign traffic when processed by the target network intrusion detection system (NIDS).

Both attack techniques proved effective, significantly reducing the network intrusion detection system’s accuracy and causing large numbers of both false positives and false negatives.
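The general idea of nudging an input until a classifier changes its verdict can be illustrated with a toy example. The sketch below is neither EAD nor TextAttack, and it is not the researchers' model: it assumes a hand-written linear detector with made-up weights and three normalized, hypothetical features, and applies a simple sign-based perturbation to them.

```python
import math

# Hypothetical weights for a toy linear detector; not the study's model.
WEIGHTS = [2.0, 1.5, -0.5]
BIAS = -1.0


def score(features):
    """Probability-like score that the input is attack traffic."""
    z = sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS
    return 1.0 / (1.0 + math.exp(-z))


def is_flagged(features, threshold=0.5):
    return score(features) >= threshold


def perturb(features, epsilon):
    """Shift each feature by at most epsilon in the direction that lowers the score.

    For a linear model the gradient of the logit with respect to a feature
    is its weight, so stepping against the sign of the weight reduces the score.
    """
    return [x - epsilon * math.copysign(1.0, w) for x, w in zip(features, WEIGHTS)]


# Hypothetical normalized features of an attack packet.
attack_packet = [0.6, 0.4, 0.2]
adversarial = perturb(attack_packet, epsilon=0.2)

print("original flagged:   ", is_flagged(attack_packet))
print("adversarial flagged:", is_flagged(adversarial))
```

In this toy setting a shift of 0.2 per feature is enough to move the attack sample below the detection threshold. Real attacks on deep models must also keep the perturbed packet valid and functional, which this sketch does not attempt to model.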

“While both attacks could easily generate adversarial examples with the DNS Amplification data we used, TextAttack was more suited for minimally perturbing the datatypes in the packet features,” Mathews said.

The researchers have not yet tested the attack on off-the-shelf intrusion detection systems, but plan to do so and report the findings in the future.

Structure of adversarial attack against ML-based network intrusion detection system

Complexities of using ML in cybersecurity

The researchers conclude that it is relatively easy to deceive a machine learning-based network intrusion detection system with adversarial attacks, and that adversarial algorithms originally designed for another application can be adapted to network classifiers.

“The biggest takeaway would be that using deep learning in network security is not a simple solution, and as a standalone NIDS, they are quite fragile,” Mathews said.

“For a classifier used to detect attacks on critical networks, there should be extensive testing. Using these DL models in conjunction with a rules-based NIDS as a secondary detector can prove to be very effective as well.”
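One way to picture the layered setup Mathews describes is a detector that raises an alert when either a simple rule or the learned model flags a flow. The sketch below is an assumption about how such a combination might look, not the researchers' design: the `Flow` fields, the size-ratio rule, and both thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Flow:
    request_bytes: int
    response_bytes: int
    model_score: float  # hypothetical output of a DL classifier, between 0 and 1


def rule_flags(flow: Flow, max_ratio: float = 10.0) -> bool:
    """Rule-based check: the response is suspiciously large relative to the request."""
    return flow.response_bytes > max_ratio * flow.request_bytes


def model_flags(flow: Flow, threshold: float = 0.5) -> bool:
    """Learned check: the classifier score crosses the alert threshold."""
    return flow.model_score >= threshold


def combined_verdict(flow: Flow) -> bool:
    """Alert if either detector fires, so evading one is not enough."""
    return rule_flags(flow) or model_flags(flow)


# An adversarial flow that lowers the model score but still amplifies traffic.
evasive = Flow(request_bytes=60, response_bytes=3000, model_score=0.3)
# An ordinary lookup with a small response and a low score.
benign = Flow(request_bytes=60, response_bytes=120, model_score=0.1)

print("evasive flow alert:", combined_verdict(evasive))
print("benign flow alert: ", combined_verdict(benign))
```

Using a logical OR favors catching attacks at the cost of more false alarms; a deployment that cares more about false positives might require both detectors to agree.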

The team is in the process of expanding their findings to other kinds of attacks, including IoT DDoS traffic.
