Behind the scenes at the world’s most popular security vulnerability scoring system

CVSS, the widely used IT vulnerability scoring system, celebrates its 15th anniversary in 2019.

Used by security researchers and organizations around the world, CVSS (the Common Vulnerability Scoring System) provides an open framework for communicating the characteristics and impact of security vulnerabilities.

It was introduced by the National Infrastructure Advisory Council (NIAC) in 2004, and in 2005 the NIAC asked the non-profit Forum of Incident Response and Security Teams (FIRST) to be its custodian.

As the ubiquitous rating system toasts 15 years of operation, The Daily Swig spoke to CVSS co-chairs Dave Dugal and Darius Wiles, along with FIRST.org director Maarten Van Horenbeeck, about keeping score of vulnerabilities in an ever-changing landscape, and the future of the CVSS.

Could you provide a brief history of FIRST.org and how the CVSS is used by the security community?

Dave Dugal: This rating system was designed to provide open and universally standard severity ratings of software vulnerabilities. There was a critical need to help organizations appropriately prioritize security vulnerabilities across their constituencies, and the lack of a common scoring system had security teams worldwide solving the same problems with little or no coordination. FIRST worked closely with CERT/CC and MITRE to develop and promote CVSS.

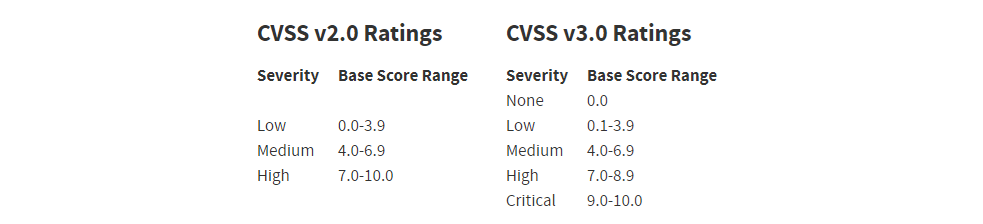

The initial CVSS v1 design was not subjected to mass peer review across multiple organizations or industries. After production use, feedback from the CVSS Special Interest Group (SIG) and others indicated there were significant issues with the initial draft of CVSS. To help address these, and to increase the accuracy of CVSS, the CVSS-SIG began scoring vulnerabilities, comparing scores, examining inconsistencies, and authoring amendments to fix them. When the CVSS-SIG reached consensus around a particular problem or potential enhancement, it voted to approve, discard, or send the amendment back to committee for further discussion and work. The last round of voting ended June 1, 2007, with CVSS v2 being published soon after.

CVSS aims to provide an objective measure of the severity of security vulnerabilities. It is widely used in the security community, with many product vendors releasing CVSS scores with security patches. Several organizations collate scores and provide a single resource for viewing and searching scores across products. Organizations can also use CVSS as an input to a higher-level risk assessment methodology, to help determine threats to their computing environments and help them prioritize remediation activities.

What were the main focuses or considerations of FIRST.org when creating the CVSS system? How much focus do you place on usability? Was there a trade-off between ease of use and accuracy?

DD: FIRST has used input from industry subject-matter experts to continue to enhance and refine CVSS so that it stays applicable to the vulnerabilities, products, and platforms developed over the past 15 years and beyond.

The primary goal of CVSS is to provide a deterministic and repeatable way to score the severity of a vulnerability across many different constituencies. By measuring a vulnerability’s Exploitability Metrics in concert with its Impact Metrics, a scorer can derive a deterministic value from 0 to 10. Consumers of CVSS can then use this score as input to a larger decision matrix of risk, remediation, and mitigation specific to their particular environment and risk tolerance.
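As an illustration of how such a deterministic score can be derived, the sketch below computes a CVSS v3.0 base score from a vector string. The metric weights and equations are assumed to match the published v3.0 specification and should be checked against it before any real use. The names `base_score` and `roundup` are illustrative only and do not belong to any official library, and the integer-based rounding helper is a choice made for this sketch to avoid floating-point edge cases.

```python
# Metric weights, assumed to match the CVSS v3.0 specification.
WEIGHTS = {
    "AV": {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2},  # Attack Vector
    "AC": {"L": 0.77, "H": 0.44},                       # Attack Complexity
    "UI": {"N": 0.85, "R": 0.62},                       # User Interaction
    "C": {"H": 0.56, "L": 0.22, "N": 0.0},              # Confidentiality
    "I": {"H": 0.56, "L": 0.22, "N": 0.0},              # Integrity
    "A": {"H": 0.56, "L": 0.22, "N": 0.0},              # Availability
}

# Privileges Required is weighted differently when Scope is Changed.
PR_WEIGHTS = {
    "U": {"N": 0.85, "L": 0.62, "H": 0.27},
    "C": {"N": 0.85, "L": 0.68, "H": 0.5},
}


def roundup(value: float) -> float:
    """Round up to one decimal place using integer arithmetic."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (scaled // 10000 + 1) / 10.0


def base_score(vector: str) -> float:
    """Return the base score for a vector such as 'CVSS:3.0/AV:N/AC:L/...'."""
    metrics = dict(part.split(":", 1) for part in vector.split("/"))
    scope_changed = metrics["S"] == "C"

    def weight(name: str) -> float:
        return WEIGHTS[name][metrics[name]]

    # Impact sub-score, built from the three Impact Metrics.
    iss = 1 - (1 - weight("C")) * (1 - weight("I")) * (1 - weight("A"))
    if scope_changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss

    # Exploitability sub-score, built from the four Exploitability Metrics.
    privileges = PR_WEIGHTS[metrics["S"]][metrics["PR"]]
    exploitability = (
        8.22 * weight("AV") * weight("AC") * privileges * weight("UI")
    )

    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if scope_changed:
        total *= 1.08
    return roundup(min(total, 10.0))


if __name__ == "__main__":
    print(base_score("CVSS:3.0/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"))  # 9.8
    print(base_score("CVSS:3.0/AV:N/AC:L/PR:N/UI:R/S:C/C:L/I:L/A:N"))  # 6.1
```

The same vector always yields the same number, which is the repeatability the standard aims for. Temporal and Environmental metrics are left out of this sketch.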

Usability is a prime consideration when making CVSS improvements. Several changes being made in CVSS v3.1 are to improve the clarity of concepts introduced in CVSS v3.0, and thereby improve the overall ease of use of the standard.

How has the scoring system evolved over time? Are there plans for a v4.0 any time soon?

Darius Wiles: The CVSS-SIG is currently working on CVSS v3.1, which will be released this year. One of the more significant changes is the introduction of the Extensions Framework, which provides a way to add and modify metrics for particular use cases. CVSS measures characteristics common to most security vulnerabilities, but particular industries may wish to measure additional factors, such as physical damage, and the Extensions Framework provides guidance on how to do this.

After the release of CVSS v3.1, work will begin on CVSS v4.0. This will incorporate larger changes, such as the addition of completely new metrics, and is expected to take a few years to complete. A list of work items that will be considered for future revisions to the standard can be found here.

Looking back to 2018, has FIRST.org noticed any trends relating to the vulnerability and threat landscape? Do you expect these trends to continue through 2019?

DW: As physical devices increase in sophistication, there is a growing need to be able to assess the physical impact caused by successful exploits of security vulnerabilities. In 2018 we saw interest from the healthcare and industrial control system sectors in including physical factors in severity scores, and the trend is likely to continue into 2019, with sectors such as automotive possibly also exploring the use of CVSS.

What are the main challenges facing the curators of CVSS, and how are they working to overcome these hurdles?

DW: One challenge is expanding CVSS to meet the needs of more industry sectors while keeping the standard simple. The CVSS-SIG includes participants from many industry sectors, and we are hoping to widen this to include people with security expertise in the healthcare, industrial control systems, and automotive industries to ensure the standard works well across all industries.

As a non-profit, FIRST.org isn't able to provide a monetary reward for its vulnerability disclosure program – how do you encourage researchers to take part without a financial incentive?

Maarten Van Horenbeeck: You are correct that FIRST, as a non-profit, doesn’t have significant funds available to invest in large monetary rewards for our bug bounty program. However, the security of our systems and information is a priority, which is why we launched our bug bounty program in 2017. In response to valid and confirmed vulnerability reports, FIRST provides a token of our appreciation, which typically is a gift card to an online shopping site.

As you can see from our site, so far we have issued around 40 of these in response to many different issues that have been brought to our attention. We’ve actually found the security researcher community to be incredibly helpful and understanding of the fact that we’re unable to provide large bounties. In practice, we find that many of them are eager to help us create a more secure environment for our users. They gladly accept the gift card, but typically are more interested in making sure we properly fix the issue they report.

An interesting learning from our program has been that the most difficult challenge has not been motivating researchers to help us – but working with them so they understand our constraints.

As with most programs, our capacity to properly and rapidly address issues turned out to be a challenge. During the launch we learned a lot, and saw how reports often came in simultaneously, stretching our resources. Our technology team has leveraged these learnings to grow our capability, respond more effectively to security issues, and build better security controls over time that reduce the impact of many vulnerabilities reported.