Implementation flaws in quantum key distribution systems can undermine claims of ‘unhackable’ cryptographic security, one expert warns

Academics at the University of Bristol recently claimed to have made a breakthrough in making quantum key distribution (QKD) systems commercially viable at scale.

Using a technique known as ‘multiplexing’, the team has developed a prototype system that relies on fewer receiver boxes, potentially slashing the cost of building quantum key distribution systems, which are currently used only by governments and large multinational banks.

However, following the recent publication of an article in The Daily Swig, Taylor Hornby, senior security engineer at Electric Coin Company, has been in touch to caution us that comparable systems have been broken in the past because of implementation problems.

“If they’re claiming higher security than standard cryptography, they need evidence they’re less likely to have implementation flaws,” Hornby told us before offering a lengthier explanation of his thinking (reproduced in full, with light editing) below.

‘More evidence required’ – Taylor Hornby on quantum encryption and security

It’s technically correct that when “implemented correctly”, quantum key distribution leverages the laws of physics to ensure that “data being transmitted cannot be intercepted and hacked”.

However, that “implemented correctly” is a pretty big assumption. Similar systems in the past have been broken through implementation flaws, so if the researchers are claiming higher security than standard cryptography, they need evidence they’re less likely to have implementation flaws.

Everyone’s almost certainly better off using normal crypto that’s post-quantum secure and paying people (a fraction of) the £300,000 cost to audit it.

Common narrative

A common narrative in favor of QKD is that it’s more secure than conventional cryptography because it doesn’t need to rely on computational difficulty assumptions (such as that factoring is hard, that SHA256 collisions are hard to find, and so forth).

It’s true that QKD eliminates the need to rely on those computational hardness assumptions, but that comes at an additional risk of implementation flaws.

Conventional cryptography can have implementation flaws, too (e.g. Heartbleed, Zombie Poodle, and many other examples). However, they’re usually just software mistakes that can be patched, and there’s an industry of cryptographers and security auditors trained to find and fix them.

Over time, the flaws get found and fixed, and the implementations become more secure.


Note that it’s very rare for conventional cryptography to be broken because of weaknesses in the computational hardness assumptions.

MD5 and SHA1 collisions are two examples, but consider that AES and even DES are not showing substantial signs of weakness, and even MD5 is still secure against second-preimage attacks.

Quantum systems, on the other hand, can have physical vulnerabilities that come from the fact that real single-photon detectors and other components don’t behave exactly as their theoretical models predict.

Single-photon detectors

In one case, researchers were able to control single-photon detectors in a QKD system by shining bright light on them (making them behave more like brightness sensors than single-photon detectors).

A defense against this attack was proposed: vary the detectors’ efficiency randomly. Bright light from an attacker will always set off the detector, whereas in normal operation more photons should be lost when the efficiency is low. The recipient can therefore tell they are being attacked when they don’t see a higher rate of lost photons during low-efficiency periods.
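The logic of that defense can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not a model of any real QKD receiver: the two efficiency values, the function names and the detection threshold are all hypothetical, and a real detector is far more complicated than a biased coin.

```python
import random

# Hypothetical efficiency settings the receiver switches between at random.
LOW_EFF, HIGH_EFF = 0.1, 0.5

def click_rates(attack, rounds=20000, seed=0):
    """Return (click rate at low efficiency, click rate at high efficiency)."""
    rng = random.Random(seed)
    clicks = {LOW_EFF: 0, HIGH_EFF: 0}
    trials = {LOW_EFF: 0, HIGH_EFF: 0}
    for _ in range(rounds):
        eff = rng.choice((LOW_EFF, HIGH_EFF))  # receiver's secret setting
        trials[eff] += 1
        if attack:
            clicked = True                 # bright light always sets off the detector
        else:
            clicked = rng.random() < eff   # a single photon is detected with prob. eff
        clicks[eff] += clicked
    return clicks[LOW_EFF] / trials[LOW_EFF], clicks[HIGH_EFF] / trials[HIGH_EFF]

def looks_attacked(low_rate, high_rate):
    """Flag an attack when low-efficiency slots click almost as often as high ones."""
    if high_rate == 0:
        return False
    expected_ratio = LOW_EFF / HIGH_EFF    # 0.2 in normal operation
    threshold = (expected_ratio + 1.0) / 2 # hypothetical cut-off, halfway to 1.0
    return low_rate / high_rate > threshold

print(looks_attacked(*click_rates(attack=False)))
print(looks_attacked(*click_rates(attack=True)))
```

In normal operation the low-efficiency slots lose many more photons, so the ratio of click rates stays near 0.2. Under the bright-light attack every slot clicks, the ratio rises to 1, and the check trips.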

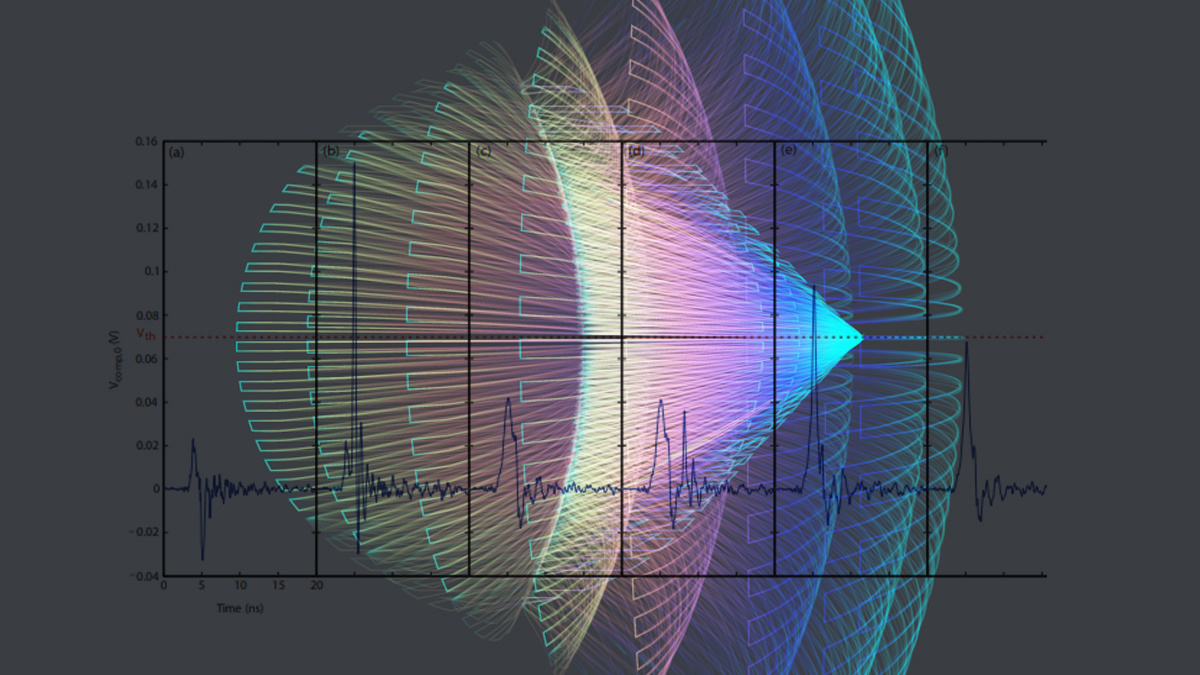

Researchers then worked out a way around that defense. By offsetting the timing of short pulses against the timing of a gate clock in the detector, they could trigger the detector just when the efficiency was high and not when it was low, so they could simulate the expected lost photons.
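A toy simulation shows why this defeats a check based only on click statistics. It is a sketch, not the physics of the real attack: the efficiency values, the per-setting trigger probabilities for an offset pulse, and the fraction of slots the attacker uses are all hypothetical numbers, chosen so the attacker’s clicks mirror the honest loss pattern.

```python
import random

LOW_EFF, HIGH_EFF = 0.1, 0.5   # hypothetical efficiency settings
# Hypothetical chance that an offset pulse fires the detector in each setting.
TIMED_TRIGGER = {LOW_EFF: 0.2, HIGH_EFF: 1.0}
PULSE_FRACTION = 0.5           # hypothetical: attacker pulses in half the slots

def observed_ratio(mode, rounds=40000, seed=1):
    """Ratio of low-efficiency to high-efficiency click rates seen by the receiver."""
    rng = random.Random(seed)
    clicks = {LOW_EFF: 0, HIGH_EFF: 0}
    trials = {LOW_EFF: 0, HIGH_EFF: 0}
    for _ in range(rounds):
        eff = rng.choice((LOW_EFF, HIGH_EFF))  # receiver's secret setting
        trials[eff] += 1
        if mode == "honest":
            clicked = rng.random() < eff       # ordinary single-photon detection
        elif mode == "bright":
            clicked = True                     # blinding light always clicks
        else:                                  # "timed": offset short pulses
            clicked = (rng.random() < PULSE_FRACTION
                       and rng.random() < TIMED_TRIGGER[eff])
        clicks[eff] += clicked
    low_rate = clicks[LOW_EFF] / trials[LOW_EFF]
    high_rate = clicks[HIGH_EFF] / trials[HIGH_EFF]
    return low_rate / high_rate

for mode in ("honest", "bright", "timed"):
    print(mode, round(observed_ratio(mode), 2))
```

Under these assumptions the bright-light attack stands out with a ratio of 1, while the timed attack produces roughly the same ratio as honest operation, so a receiver that only compares loss rates cannot tell the two apart.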

These attacks are on older QKD systems, and I haven’t looked into the architecture used by researchers quoted in the article, but this shows that QKD systems can have their own kinds of physical flaws, and the risk they introduce needs to be balanced against the benefits of moving away from reliance on computational hardness assumptions.

The burden is on QKD proponents to argue that their physical devices are less likely to contain vulnerabilities than software implementations of conventional cryptography systems.

A potential way to do that is to use device-independent QKD protocols – protocols which are proven secure even when the attacker is allowed to have some control over the physical hardware.

Current designs for device-independent protocols are less efficient, however, and they still make assumptions about what the attacker is allowed to do.

Those assumptions need to be tested adversarially before we can be confident in the implementation’s security.
