The classic attack vector is here to stay

Four new variants of HTTP request smuggling attacks were disclosed at Black Hat USA yesterday (August 6).

Speaking at Black Hat, which this year takes place virtually, Amit Klein, vice president of security research at SafeBreach, described new ways to use the long-established attack against modern web servers.

HTTP request smuggling was discovered in 2005 by Klein, Chaim Linhart, Ronen Heled, and Steve Orrin.

The attack vector is a class of web security vulnerability that can lead to a variety of problems, including cache poisoning, session hijacking, and the circumvention of security filters.

PortSwigger web security researcher James Kettle previously demonstrated how isolated HTTP requests can be manipulated to desynchronize message processing.

In May, penetration tester Robin Verton and Telekom Security researcher Simon Peters explored how reverse proxies can be abused to mount an HTTP header smuggling attack.

This attack technique can be utilized to bypass authentication checks, steal data, compromise back-end systems, hijack user requests, poison web caches, and more.

Klein has now demonstrated four new variants of the old attack which work against various proxy-server and proxy-proxy setups in what the researcher describes as “any or all HTTP devices”.

The research scope included Apache, Abyss, IIS, Nginx, Node.js, Tomcat, Squid, Caddy, and other server setups in web server and HTTP proxy modes.

The first variant, “Header SP/CR junk”, uses a payload built from Content-Length headers and relies on a system accepting what it takes to be multiple Content-Length headers.

Squid, for example, ignores such headers, whereas Abyss accepts them and converts the payload into a header, potentially leading to successful cache poisoning.
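The disagreement between a strict and a lenient header parser can be sketched in a few lines. This is a minimal illustration, not code from Squid or Abyss: the header lines, the `example.test` host, and the `content_lengths` helper are all hypothetical, and the junk header is only an assumed example of the kind of payload the variant name suggests.

```python
# Hypothetical request headers: one valid Content-Length, and one whose
# field name carries junk after a space (assumed payload, for illustration).
RAW_HEADERS = [
    "Host: example.test",
    "Content-Length: 0",
    "Content-Length abcde: 20",
]


def content_lengths(lines, lenient):
    """Return every value this parser treats as a Content-Length header.

    A strict parser requires the field name to be exactly 'Content-Length'.
    A lenient parser cuts the field name at the first space, so trailing
    junk is silently dropped and the line is accepted as Content-Length.
    """
    values = []
    for line in lines:
        name, _, value = line.partition(":")
        if lenient:
            name = name.split(" ")[0]
        if name.lower() == "content-length":
            values.append(int(value.strip()))
    return values


strict_view = content_lengths(RAW_HEADERS, lenient=False)
lenient_view = content_lengths(RAW_HEADERS, lenient=True)
print(strict_view)   # [0]
print(lenient_view)  # [0, 20]
```

When a proxy takes the strict view and the server behind it takes the lenient one, the two no longer agree on where the request body ends, which is the precondition for smuggling.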

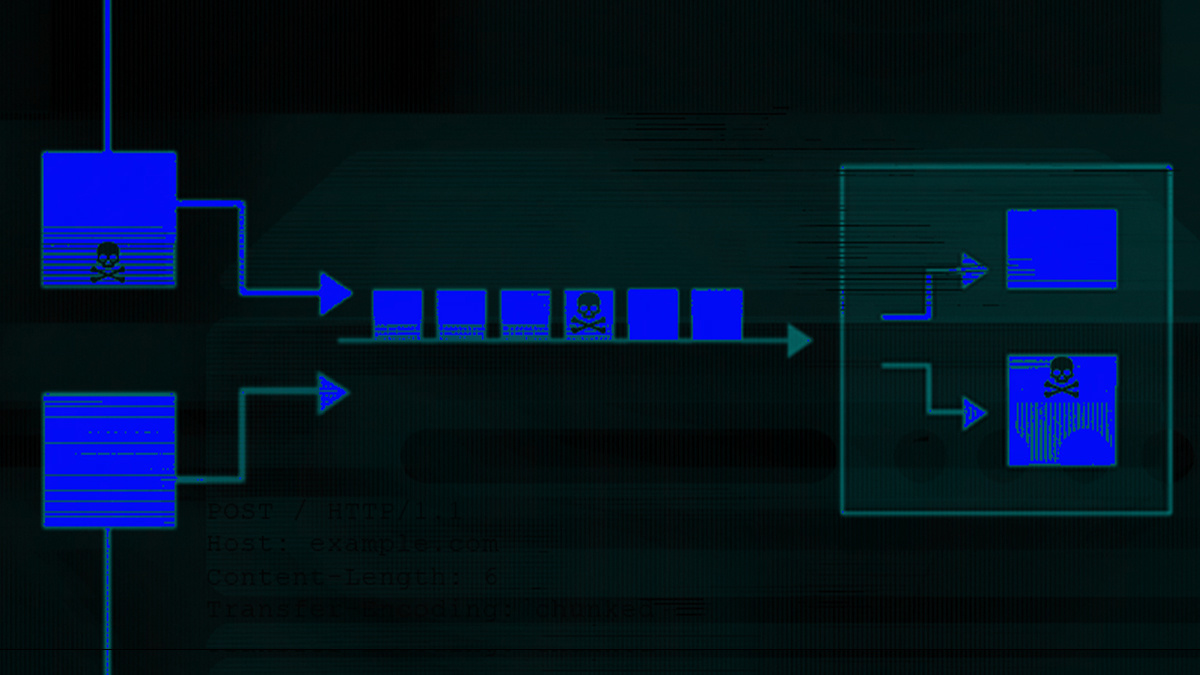

The four new variants work against various proxy-server and proxy-proxy setups

In the second attack variation, “Wait for it”, Klein attempted to hand Abyss a single Content-Length header during an HTTP request smuggling attempt.

The researcher realized that when Abyss receives a request whose body is shorter than the Content-Length value, it waits 30 seconds before attempting to fulfill the request.

“It ignores the remaining body of the request (that is, it reads it and silently discards it) when it is finally sent,” Klein explained. “This way, I can use the above techniques without the valid Content-Length header.”
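The timing behavior described above can be modeled with a small simulation. This is a sketch under stated assumptions rather than a reproduction of Abyss: `simulate_wait` is a hypothetical helper, arrival times are plain numbers in seconds instead of real socket events, and bytes beyond the declared length are simply left out of the model.

```python
def simulate_wait(chunks, content_length, timeout=30.0):
    """Model a server that stops waiting for a short body after `timeout`.

    `chunks` is a list of (arrival_seconds, data) pairs. Body bytes that
    arrive before the timeout are used for the request. Bytes that arrive
    later, up to the declared Content-Length, are read and discarded.
    """
    body = b""
    discarded = b""
    for arrival, data in sorted(chunks, key=lambda c: c[0]):
        # How many declared body bytes are still unaccounted for.
        missing = content_length - len(body) - len(discarded)
        piece = data[:max(missing, 0)]
        if arrival <= timeout:
            body += piece
        else:
            discarded += piece
    return body, discarded


# Three bytes arrive at once; the rest of the declared body shows up late.
used, dropped = simulate_wait([(0.0, b"abc"), (45.0, b"defghij")], content_length=10)
print(used)     # b'abc'
print(dropped)  # b'defghij'
```

In this model the request is served with the partial body, and the late bytes never reach the application, which matches the "reads it and silently discards it" behavior Klein describes.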

The third variant, “HTTP/1.2 to bypass mod_security CRS-like defense”, aims to bypass security rules like those set by the OWASP ModSecurity Core Rule Set (CRS), an open source set of attack detection rules to protect against the OWASP Top Ten threats, including HTTP request smuggling.

Security bypass

Klein found that it is possible to bypass mod_security defenses (CRS 3.2.0 detection rules) via HTTP/1.2. Among the core rules are 920 (920180), which blocks only variant two, and 921 (921110), which affects only the first two attack methods if CR or LF control codes are used.

Rule 921 (921150) aims to prevent protocol attacks by detecting newlines in argument names.

While this rule blocks all of the researcher’s attacks, moving CR and LF from a parameter name to a parameter value evades it, leaving only a simple rule, 921130, to bypass.

921130 was originally designed to stop HTTP response splitting. However, as many servers will service HTTP/1.2 requests as if they are HTTP/1.1, a variation of HTTP request smuggling, as described in 2015 research, can be applied to bypass this security rule and achieve a successful attack.
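The version gap can be sketched as follows. The pattern below is a simplified stand-in, assumed for illustration, for a rule that flags an HTTP version token inside a parameter value; it is not presented as the actual CRS 921130 expression. The smuggled value, the `/poison` path, and the `example.test` host are hypothetical.

```python
import re

# Assumed, simplified rule: flag values that contain a known HTTP version token.
NARROW_RULE = re.compile(r"\bhttp/(?:0\.9|1\.[01])", re.IGNORECASE)

# A broader alternative that flags any "HTTP/<digit>" token.
BROAD_RULE = re.compile(r"\bhttp/\d", re.IGNORECASE)


def is_flagged(rule, value):
    """Return True if the rule finds an HTTP version token in the value."""
    return rule.search(value) is not None


# Hypothetical parameter value carrying an embedded request line.
value_11 = "x\r\n\r\nGET /poison HTTP/1.1\r\nHost: example.test\r\n\r\n"
value_12 = value_11.replace("HTTP/1.1", "HTTP/1.2")

print(is_flagged(NARROW_RULE, value_11))  # True
print(is_flagged(NARROW_RULE, value_12))  # False
print(is_flagged(BROAD_RULE, value_12))   # True
```

A rule that enumerates known versions misses `HTTP/1.2`, while a server that treats 1.2 as 1.1 still processes the embedded request. Matching any digit after `HTTP/` closes that particular gap in this sketch.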

The fourth variant, “a plain solution”, abuses Content-Type text/plain to bypass paranoia_level≤2 checks, which do not appear to check arguments used for text/plain.

At Black Hat USA, Klein also documented the successful use of a CR header attack, which was previously listed as theoretical and which he demonstrated as variant five. It is listed in the Burp HTTP request smuggling module as “0dwrap”.

Klein reported his findings to the affected vendors. The first and second variants, and the previously theoretical fifth, were fixed by Aprelium (Abyss X1) in v2.14, and Squid, as an impacted organization, has been informed of the first and fifth issues. OWASP CRS has resolved variants three and four in v3.3.0-rc2.

Risk factor

Speaking to The Daily Swig, Klein said that estimating the risk of this attack is “difficult”.

He said: “The risk stems from combinations of web proxies and web servers that together form vulnerable systems.

“One of the alarming properties of HTTP Request Smuggling vulnerabilities is that a web site may run server A, and a client side ISP/enterprise/university may run caching forward proxy B, and this combination (A+B) is vulnerable to HTTP Request Smuggling.

“Yet how can the website know about this, and mitigate this situation, without knowing all the proxy servers in use by all its clients? So, estimating the risk is very difficult, especially without complete knowledge of all the servers on the internet.”

Klein added: “As this is an infrastructure problem (not an application problem), I recommend talking to your web server / proxy server / WAF vendors and making sure their products are up to date and secure against HTTP Request Smuggling.”
