Renowned researcher James Kettle demonstrates his latest attack technique in Las Vegas

A new class of HTTP request smuggling attack allowed a security researcher to compromise multiple popular websites including Amazon and Akamai, break TLS, and exploit Apache servers.

Speaking at Black Hat USA yesterday (August 10), James Kettle unveiled research that opens a new frontier in HTTP request smuggling – browser-powered desync attacks.

The briefing and its whitepaper, titled ‘Browser-Powered Desync Attacks: A New Frontier in HTTP Request Smuggling’, build on Kettle’s previous research into desync attacks.


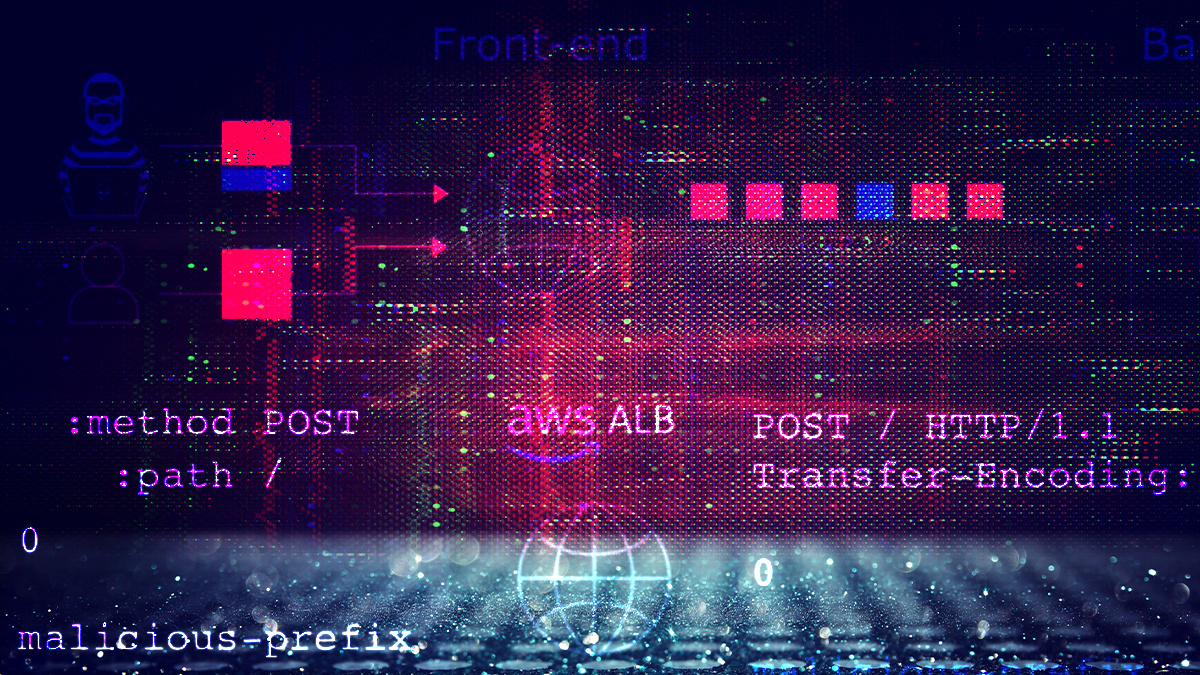

Traditional desync attacks poison the connection between a front-end and back-end server and are therefore impossible on websites that don’t use a front-end/back-end architecture.

However, this new technique causes a desync between the front-end and the browser, allowing an attacker to “craft high-severity exploits without relying on malformed requests that browsers will never send”, Kettle noted.

This can expose a whole new range of websites to server-side request smuggling and enables an attacker to perform client-side variations of these attacks by inducing a victim’s browser to poison its own connection to a vulnerable web server.

Kettle demonstrated how he was able to turn a victim’s web browser into a desync delivery platform, shifting the request smuggling frontier by exposing single-server websites and internal networks.

He was able to combine cross-domain requests with server flaws to poison browser connection pools, install backdoors, and release desync worms – in turn compromising targets including Amazon, Apache, Akamai, Varnish, and multiple web VPNs.

Discovery

Kettle told attendees at the 25th anniversary of the annual hacking conference that four separate vulnerabilities led to the discovery of browser-powered desync attacks.

The first, a request validation flaw, lets an attacker send two requests down the same connection. The first request carries a valid Host header, and the second names a host that would normally be blocked; the attacker gains access to that host because the reverse proxy only validates the Host header of the first request.
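The validation flaw described above can be illustrated with a toy model. This is a minimal sketch with no networking; the class names, the allow-list, and the `.example` hostnames are hypothetical and are not taken from Kettle's research.

```python
from dataclasses import dataclass, field

# Hypothetical allow-list of hosts the proxy is willing to serve.
ALLOWED_HOSTS = {"public.example"}


@dataclass
class FlawedProxyConnection:
    """Toy model of one client connection to a reverse proxy.

    Assumed flaw: the Host header is checked only for the first request
    on the connection; later requests are forwarded unchecked.
    """
    validated: bool = False
    forwarded: list = field(default_factory=list)

    def handle(self, host: str) -> str:
        if not self.validated:
            if host not in ALLOWED_HOSTS:
                return "403 rejected"
            self.validated = True
        self.forwarded.append(host)
        return f"200 forwarded to {host}"


@dataclass
class FixedProxyConnection:
    """Same toy proxy, but every request's Host header is validated."""
    forwarded: list = field(default_factory=list)

    def handle(self, host: str) -> str:
        if host not in ALLOWED_HOSTS:
            return "403 rejected"
        self.forwarded.append(host)
        return f"200 forwarded to {host}"


flawed = FlawedProxyConnection()
print(flawed.handle("public.example"))    # first request passes validation
print(flawed.handle("internal.example"))  # second request is never checked

fixed = FixedProxyConnection()
print(fixed.handle("public.example"))
print(fixed.handle("internal.example"))   # rejected on every request
```

In this model the only difference between the two classes is whether validation is tied to the connection or to each request.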

The second, first-request routing, is a closely related flaw which occurs when the front-end uses the first request’s Host header to decide which back-end to route the request to, and then routes all subsequent requests from the same client connection down the same back-end connection.
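First-request routing can be sketched in the same toy style. The routing table, backend names, and hostnames below are hypothetical; the point is only that the back-end is chosen once per connection rather than once per request.

```python
# Hypothetical routing table mapping Host header values to back-ends.
BACKENDS = {"shop.example": "backend-A", "admin.example": "backend-B"}


class FirstRequestRouter:
    """Toy front-end that picks a back-end from the first request's Host
    header and reuses it for every later request on the same connection."""

    def __init__(self):
        self.backend = None

    def route(self, host: str) -> str:
        if self.backend is None:
            # Routing decision is made once, on the first request only.
            self.backend = BACKENDS[host]
        return self.backend


router = FirstRequestRouter()
print(router.route("shop.example"))   # chosen from the first Host header
print(router.route("admin.example"))  # still sent to the first back-end
```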

Kettle also discovered a technique to detect connection-locked request smuggling by using a delay and reading the data early to decide if the front-end is using the Content-Length header.

James Kettle presents at the annual hacking conference held in Las Vegas


If the front-end is using the Content-Length header, the request will time out, which distinguishes connection-locked HTTP/1 request smuggling from harmless HTTP pipelining.

A fourth vulnerability caused a desync known as CL.0/H2.0. Kettle was able to use this to compromise Amazon users’ accounts, enabling him to steal users’ requests and add them to his shopping list. He could capture all their requests, including tokens which could have enabled him to impersonate those users.
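The idea behind a CL.0 desync is that the two servers disagree about where a request ends: one honors Content-Length while the other treats the body length as zero. The sketch below is a simplified parser written for illustration only; the function name, endpoint paths, and hostname are hypothetical, and real servers are considerably more complex.

```python
def split_requests(stream: bytes, honor_content_length: bool) -> list:
    """Split a byte stream into HTTP/1.1 requests.

    When honor_content_length is False, every body is assumed to be
    empty, which models a server that ignores Content-Length.
    """
    requests = []
    while stream:
        head, sep, rest = stream.partition(b"\r\n\r\n")
        if not sep:
            # Incomplete headers: left waiting as the start of the next request.
            requests.append(stream)
            break
        length = 0
        if honor_content_length:
            for line in head.split(b"\r\n")[1:]:
                name, _, value = line.partition(b":")
                if name.strip().lower() == b"content-length":
                    length = int(value.strip().decode())
        body, stream = rest[:length], rest[length:]
        requests.append(head + sep + body)
    return requests


# A valid request whose body happens to look like the start of another request.
body = b"GET /hypothetical-path HTTP/1.1\r\nX-Ignore: x"
stream = (
    b"POST /some-endpoint HTTP/1.1\r\n"
    b"Host: site.example\r\n"
    b"Content-Length: " + str(len(body)).encode() + b"\r\n"
    b"\r\n" + body
)

front_view = split_requests(stream, honor_content_length=True)
back_view = split_requests(stream, honor_content_length=False)
print(len(front_view))  # 1: the whole stream is a single request
print(len(back_view))   # 2: the body is left over as a request prefix
```

Under these assumptions, the leftover prefix in `back_view` would be prepended to whatever request arrives next on that connection, which is how another user's request can end up captured.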

Speaking to The Daily Swig, Kettle said: “I was really surprised that it was possible to cause a CL.0 desync and also a client-side desync using a legitimate, valid HTTP request.

“It’s understandable when servers get confused by requests that use header obfuscation to hit edge-cases, but getting desync’d by a completely valid, RFC-compliant HTTP request is something else.”

‘Much cooler’ exploit

Kettle reported this issue to Amazon, which fixed it, but he soon realized that he had “made a terrible mistake and missed out on a much cooler potential exploit”.

“The attack request was so vanilla that I could have made anyone’s web browser issue it using fetch(),” Kettle noted in a whitepaper.

“By using the HEAD technique on Amazon to create an XSS gadget and execute JavaScript in victim’s browsers, I could have made each infected victim re-launch the attack themselves, spreading it to numerous others.

“This would have released a desync worm – a self-replicating attack which exploits victims to infect others with no user-interaction, rapidly exploiting every active user on Amazon.

“I wouldn’t advise attempting this on a production system, but it could be fun to try on a staging environment. Ultimately this browser-powered desync was a cool finding, a missed opportunity, and also a hint at a new attack class.”


Most server-side desyncs can only be triggered by a custom HTTP client issuing a malformed request, but as Kettle proved with Amazon, it is sometimes possible to create a browser-powered server-side desync.

This enables exploitation of single-server websites, which Kettle noted “is valuable because they’re often spectacularly poor at HTTP parsing”.

“A client-side desync attack starts with the victim visiting the attacker’s website, which then makes their browser send two cross-domain requests to the vulnerable website,” Kettle explained.

“The first request is crafted to desync the browser’s connection and make the second request trigger a harmful response, typically giving the attacker control of the victim's account.”


Kettle also demonstrated how he was able to carry out a pause-based desync attack, which is possible if a server doesn’t close a connection when timing out. If an attacker sends half of a request and pauses, the server times out and leaves the socket open. The attacker can then send the second half of the request, which the server treats as a new request.
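The pause-based behavior can be modeled without any networking. In this minimal sketch the class, its methods, and the request contents are hypothetical, and the timeout is a deliberate simplification: the server simply forgets the partial request and, depending on a flag, either closes the connection or leaves it open.

```python
class ToyServerConnection:
    """Toy model of one server-side connection with a request timeout."""

    def __init__(self, close_on_timeout: bool):
        self.close_on_timeout = close_on_timeout
        self.open = True
        self.buffer = b""

    def receive(self, data: bytes) -> None:
        # Data sent on a closed connection is never seen by the server.
        if self.open:
            self.buffer += data

    def timeout(self) -> None:
        """The server gives up waiting for the rest of the current request."""
        self.buffer = b""  # simplification: the partial request is forgotten
        if self.close_on_timeout:
            self.open = False

    def parse_next(self):
        """Return the request line of the next complete header block, if any."""
        head, sep, rest = self.buffer.partition(b"\r\n\r\n")
        if not sep:
            return None
        self.buffer = rest
        return head.split(b"\r\n")[0]


first_half = b"POST /some-endpoint HTTP/1.1\r\nHost: site.example\r\n"
second_half = b"GET /hypothetical-path HTTP/1.1\r\nHost: site.example\r\n\r\n"

for close_on_timeout in (False, True):
    conn = ToyServerConnection(close_on_timeout)
    conn.receive(first_half)   # sender pauses here
    conn.timeout()             # server times out
    conn.receive(second_half)  # sender resumes
    print(close_on_timeout, conn.parse_next())
```

When the connection stays open after the timeout, the second half is parsed as a fresh request; when the server closes the connection, nothing is parsed.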

Kettle also demonstrated a client-side pause-based desync attack, in which he broke TLS by performing a manipulator-in-the-middle (MitM) attack. Instead of trying to decrypt the traffic, he caused a delay whenever a packet of a specific size was encountered, triggering a client-side pause-based desync, which he successfully carried out against Apache.

He also automated detection of these client-side desyncs and identified a range of real vulnerable websites, including Akamai, Cisco’s web VPN, and Pulse Secure VPN.

Kettle told The Daily Swig that he plans to release a few follow-up research drops continuing the request smuggling theme over the next couple of months, but that his next major research project “will target something entirely different”.

The full whitepaper is available here.