Burp's current Spider tool has a primitive login capability: you can configure a username and password, which it submits in any login form it finds. You can do a bit better with macros and session handling rules, but this still only works with a single login account at any one time.

Burp's new crawler hugely improves the handling of application logins. It lets you configure multiple logins for different user roles within the application. It also supports self-registration of user accounts.

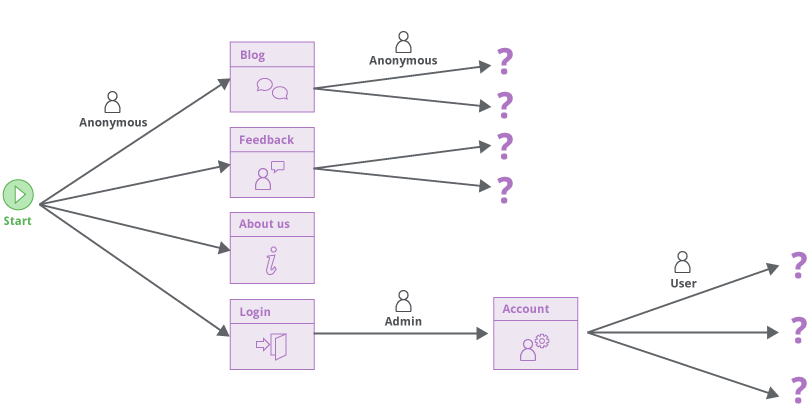

The crawler begins with an unauthenticated phase in which no credentials are submitted. When this is complete, Burp will have discovered any login and self-registration functions within the application.

If the application supports self-registration, Burp will by default attempt to register a user.

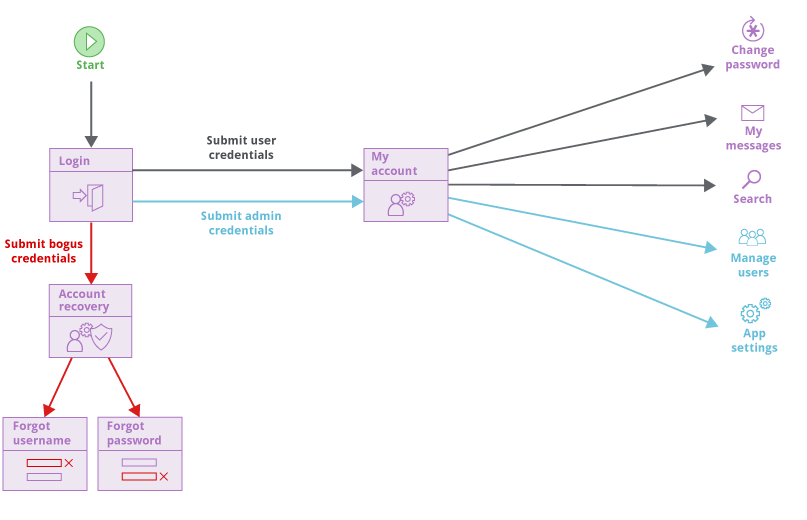

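The crawler then proceeds to an authenticated phase. It will visit the login function multiple times and submit each set of credentials in turn, including the logins you have configured and the credentials of any self-registered account.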

For each set of credentials that is submitted to the login, Burp will crawl the content that is discovered behind the login. This allows the crawler to reach the different functionality that is available to different types of user.
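The two phases can be pictured with a small sketch. This is not Burp's code or API: `crawl_in_phases`, `toy_crawl`, and the toy application data are all hypothetical names invented for illustration, and the "crawl" is just a lookup in a dictionary.

```python
from typing import Callable, Dict, List, Optional, Set, Tuple

Credentials = Tuple[str, str]  # (username, password)


def crawl_in_phases(
    crawl: Callable[[Optional[Credentials]], Set[str]],
    logins: List[Credentials],
) -> Dict[Optional[str], Set[str]]:
    """Run one unauthenticated crawl, then one crawl per set of credentials."""
    discovered: Dict[Optional[str], Set[str]] = {}
    # Phase 1: no credentials are submitted.
    discovered[None] = crawl(None)
    # Phase 2: log in with each set of credentials and crawl behind the login.
    for username, password in logins:
        discovered[username] = crawl((username, password))
    return discovered


# Hypothetical toy application: public pages plus pages that depend on the user.
PUBLIC = {"/", "/login", "/register"}
BY_USER = {
    "alice": {"/account", "/orders"},
    "admin": {"/account", "/admin/users"},
}


def toy_crawl(creds: Optional[Credentials]) -> Set[str]:
    """Stand-in for a real crawl: returns the pages visible to this user."""
    if creds is None:
        return set(PUBLIC)
    username, _password = creds
    return PUBLIC | BY_USER.get(username, set())


result = crawl_in_phases(toy_crawl, [("alice", "pw1"), ("admin", "pw2")])
only_admin = result["admin"] - result["alice"]
```

Comparing the per-user results, as `only_admin` does here, is one way to see which functionality is reachable only from a particular role.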

Although the crawler normally employs multiple crawler agents in parallel, some applications prohibit concurrent logins by the same user. Burp is able to detect this behavior, and will then allow only one login at a time for each distinct user account.
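One common way to enforce this kind of restriction is a lock per account, so that agents working as different users still run in parallel. The sketch below shows that general technique only; it assumes the restriction has already been detected, and `SessionLimiter` and its methods are hypothetical names rather than anything in Burp.

```python
import threading
import time
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


class SessionLimiter:
    """Allow at most one active session per username across worker threads."""

    def __init__(self):
        self._guard = threading.Lock()               # protects the tables below
        self._locks = defaultdict(threading.Lock)    # one lock per username
        self.active = defaultdict(int)               # sessions currently running
        self.peak = defaultdict(int)                 # highest concurrency observed

    def _lock_for(self, username):
        with self._guard:
            return self._locks[username]

    def run_as(self, username, task):
        # Only one agent at a time may hold a given user's lock.
        with self._lock_for(username):
            with self._guard:
                self.active[username] += 1
                self.peak[username] = max(self.peak[username], self.active[username])
            try:
                return task()
            finally:
                with self._guard:
                    self.active[username] -= 1


limiter = SessionLimiter()


def visit_page():
    time.sleep(0.01)  # stand-in for crawling work done while logged in
    return "ok"


# Four parallel agents share two accounts.
with ThreadPoolExecutor(max_workers=4) as pool:
    futures = [
        pool.submit(limiter.run_as, user, visit_page)
        for user in ["alice", "admin"] * 4
    ]
    outcomes = [f.result() for f in futures]
```

After the run, `limiter.peak` records the highest number of simultaneous sessions seen per account, which stays at one for each user even though four agents were active.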

The core approach of the new crawler is to construct a graph representing the navigational pathways through the application that users are able to take. This means that once content has been discovered in a given user context, it is straightforward for the crawler to revisit that content when it needs to, because the navigational pathway to reach that content includes a login using the required credentials.
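To make the idea concrete, here is a minimal sketch of such a graph, assuming a very simple model in which nodes are application states and edges are the requests that move between them. The state names, the requests, and the `path_to` helper are all hypothetical, and a breadth-first search stands in for whatever the crawler does internally.

```python
from collections import deque

# Each state maps to a list of (action, next_state) edges.
GRAPH = {
    "start": [("GET /", "home")],
    "home": [("GET /login", "login_form")],
    "login_form": [
        ("POST /login as alice", "alice_home"),
        ("POST /login as admin", "admin_home"),
    ],
    "alice_home": [("GET /orders", "alice_orders")],
    "admin_home": [("GET /admin/users", "admin_users")],
}


def path_to(graph, start, target):
    """Breadth-first search for the shortest action sequence reaching target."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, actions = queue.popleft()
        if state == target:
            return actions
        for action, next_state in graph.get(state, []):
            if next_state not in seen:
                seen.add(next_state)
                queue.append((next_state, actions + [action]))
    return None  # target is not reachable from start


admin_path = path_to(GRAPH, "start", "admin_users")
```

Here `admin_path` comes out as `["GET /", "GET /login", "POST /login as admin", "GET /admin/users"]`: the login with the required credentials is simply one step on the pathway, so replaying the path restores the right user context.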